The Industry That Powers Everything

How the world ran out of electricity before it ran out of ideas, and why a small precision engineering company in Hyderabad became a quiet bet on the AI age

Note: This is for educational purposes only. The views expressed are the author’s own and should not be construed as investment advice.

Over the last few posts, we’ve been walking through the backbone of Artificial Intelligence, one piece at a time. We covered ASML, the Dutch company whose lithography machines are the textbook definition of a monopoly. We covered TSMC, the Taiwanese foundry that makes over 90% of the world’s advanced chips without designing one. We covered Micron, the last surviving American memory maker, born in a dentist’s basement and funded by a potato farmer. And we covered Nvidia, the company that taught sand to think, from a Denny’s booth in East San Jose.

Today, we arrive at the piece that every single one of those stories has been quietly circling around. The piece nobody talks about (until it runs out). The piece that Jensen Huang’s GPUs are useless without, that TSMC’s fabs cannot operate without, that every data centre humming with intelligence requires in staggering, unrelenting quantities.

Electricity!

Not the interesting kind. Just plain, boring, always-on, 24 hours a day, 365 days a year, delivered-right-now electricity. The kind that the grid used to be able to provide without anyone having to think about it.

That kind is becoming very hard to find.

The Hardware Wall

Here is a number worth sitting with. The entire United States, all 330 million people, all its factories and hospitals and skyscrapers and suburban homes, consumes roughly half a terawatt (TW) of electricity at any given moment. Half a terawatt. That is the ceiling of the most powerful economy in the history of human civilisation.

Now here is what is happening on the other side. Nvidia is shipping hundreds of billions of dollars worth of GPUs. Microsoft, Google, Amazon, Meta are committing to data centre capacity expansions that dwarf anything built in the history of computing. A single modern AI data centre, the kind being built to train the next generation of Large Language Models (LLMs), requires somewhere between 100 megawatts and 1 gigawatt of continuous power. That is roughly the output of a nuclear reactor, dedicated to a single building, running without interruption.

The International Energy Agency says data centre electricity consumption could rise by up to 15% within the next four years. By 2030, data centres could account for 12% of America’s total power generation.

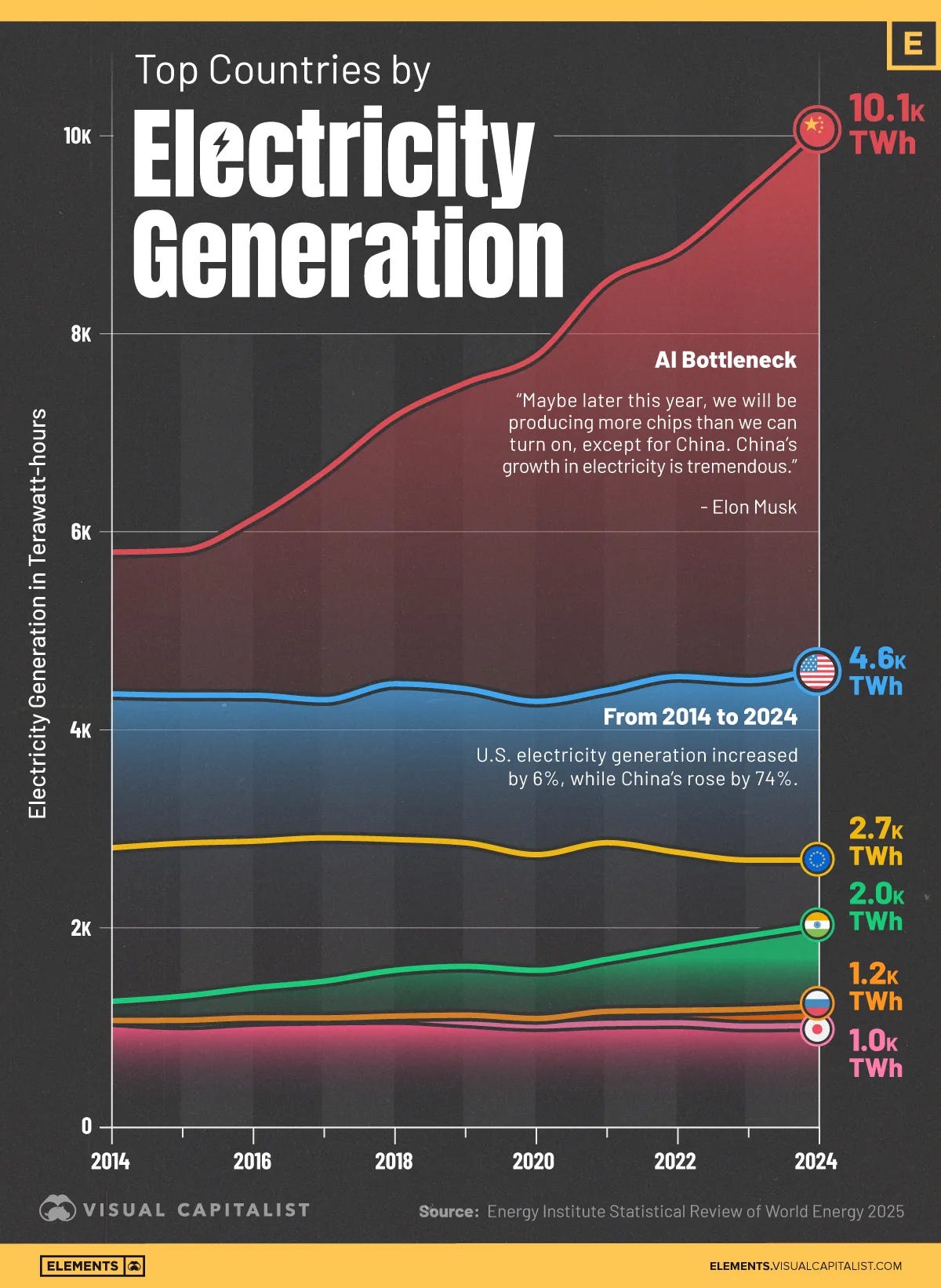
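
To put those figures side by side, here is a minimal back-of-envelope sketch in Python. It uses only the round numbers quoted above (roughly 500 GW of average US load, 100 MW to 1 GW per AI data centre, a 12% share by 2030). The function names are illustrative, and treating a share of generation as a share of average load is a simplifying assumption.

```python
# Back-of-envelope only: every input is a round number quoted in the text.
US_AVERAGE_LOAD_GW = 500.0  # "roughly half a terawatt"


def share_of_us_load(site_gw: float) -> float:
    """Fraction of average US load drawn by one site running flat out."""
    return site_gw / US_AVERAGE_LOAD_GW


def sites_for_share(target_share: float, site_gw: float) -> float:
    """Number of identical sites that add up to a given share of US load."""
    return target_share * US_AVERAGE_LOAD_GW / site_gw


for site_gw in (0.1, 1.0):  # 100 MW and 1 GW
    print(f"{site_gw} GW site: {share_of_us_load(site_gw):.2%} of US load")

print(f"1 GW sites for a 12% share: {sites_for_share(0.12, 1.0):.0f}")
```

On these numbers, a single 1 GW campus draws about 0.2% of average US load, and a 12% share is the equivalent of roughly sixty such campuses running around the clock.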

The problem? Outside of China, electricity output has been essentially flat for years. Not growing at 5% or 2% a year. Practically flat! China has been building power capacity at a furious pace. Everywhere else, the curve looks like a patient’s heartbeat on a hospital monitor right before someone calls the code.

Elon Musk, on Dwarkesh Patel’s podcast a couple of weeks ago, put it in characteristically blunt terms. The output of chips is growing exponentially, he said, but the output of electricity is flat. So how are you going to turn the chips on?

It is a fair question. It might even be the right question. Whether Musk is the right person to be asking it, given that he runs an AI company, a rocket company, and a government efficiency operation simultaneously, and tends to fold every global problem into a narrative that conveniently requires his companies to solve it, is a separate conversation (my take on it below). But the underlying math is hard to argue with.

OpenAI’s CFO Sarah Friar made the same point more quietly last year. The real bottleneck, she told CNBC, isn’t money. It’s power.

Why Renewables Can’t Save This (At Least Not Alone, Not Yet)

Before we go further, it is worth addressing the objection you are already forming. Solar and wind. We have more of it every year. Costs have fallen dramatically. Surely the solution is just more panels and turbines?

Here is the problem. AI training runs do not pause at dusk. They do not reduce consumption when clouds roll in. A large language model being trained on a thousand GPUs requires (almost) the same power at 3am on 27th January as it does at noon on 12th July. It requires it continuously, without interruption, for weeks or months.

Wind and solar are intermittent. Batteries, at today’s cost and density, cannot provide the kind of deep storage that would allow renewable power to substitute for baseload generation at data centre scale. You can build a battery system that carries a data centre through a two-hour cloudy afternoon. You cannot, at any reasonable cost, build one that carries it through three days of calm winter weather or heavy monsoon.

Musk, on the same podcast, waved this away. He pointed out that one terawatt of solar, at a 25% capacity factor, would need only about 1% of U.S. land area. Dwarkesh pressed him. So what’s the problem? Musk’s answer was revealing. He said it’s pretty hard to cover Nevada in solar panels when you have to get permits for it.

He’s not wrong about permitting. The regulatory timeline for a utility-scale solar installation can stretch past five years. But there is something a little convenient about the richest man in the world, who also happens to own a rocket company, framing the entire problem as a regulatory failure on Earth. Because his proposed solution, naturally, is to put the data centres in space.

But when someone tells you the solution to a terrestrial engineering problem is orbital data centres running on space-based solar, and they happen to own SpaceX, it’s worth pausing. Dwarkesh himself pushed back sensibly, noting that energy accounts for only 10 to 15% of total data centre cost, and that GPUs in space cannot be serviced when they fail. The depreciation cycle would be brutal. Musk was undeterred. He predicted that space will be the cheapest place for AI within 36 months, maybe 30.

Manifold Markets, for what it’s worth, gave that prediction about a 19% chance of being right.

I bring up Musk not to dismiss his observations about the energy crunch, which are directionally correct, but to flag something broader. When the world’s most prominent technologist says the answer to power scarcity is to leave Earth, it tells you two things. First, the problem is genuinely severe. Second, some of the people describing it have a financial interest in certain solutions being adopted. Filter accordingly.

The SMR Promise (And the Evidence Against It)

Let’s talk about the solutions actually being proposed.

The first and most discussed is nuclear. Specifically, small modular reactors, or SMRs. These are compact nuclear plants, typically under 300 MW, that proponents claim can be factory-built, shipped in modules, and deployed faster and cheaper than traditional gigawatt-scale nuclear plants.

The pitch is elegant. Nuclear runs 24/7, emits no carbon, has a small physical footprint relative to its output, and produces reliable baseload power. Microsoft signed a deal to restart a reactor at Three Mile Island. Amazon invested in multiple SMR developers. Google and others have expressed interest. The hype has been extraordinary.

Now here is the part that doesn’t make it into the pitch decks.

According to JP Morgan’s 2025 energy report, there are exactly three operating SMRs in the world. One in China. Two in Russia. One additional unit is under construction in Argentina. All of them had projected construction timelines of three to four years. The three operating units took twelve years or more to complete. Argentina’s has been under construction for twelve years and counting. The cost overruns are staggering. Argentina’s project has overrun by 700%. China’s by 300%. Russia’s by 400%.

These are supposed to be the proof of concept.

And it gets more interesting with the US!

In the United States, the most advanced SMR project was NuScale’s Carbon Free Power Project with the Utah Associated Municipal Power Systems. NuScale was the only company with an SMR design approved by the U.S. Nuclear Regulatory Commission. The project was supposed to build six reactors in Idaho, producing 462 MW, coming online in 2029.

The original cost estimate was $5.3 billion. By 2023, it had climbed to $9.3 billion. The target power price went from $55 per megawatt-hour to $89, even after including a $1.4 billion subsidy from the Department of Energy and $30 per MWh in tax credits from the Inflation Reduction Act. Without those subsidies, the price would have been closer to $120 per MWh.
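
The scale of that escalation is easier to see as arithmetic. The sketch below is a rough illustration using only the figures quoted above ($5.3 billion to $9.3 billion, $55 to $89 per MWh, 462 MW of planned capacity); the helper names are my own and nothing here comes from NuScale’s or UAMPS’s filings.

```python
def pct_increase(old: float, new: float) -> float:
    """Percentage increase from old to new."""
    return (new - old) / old * 100.0


def cost_per_kw(total_usd: float, capacity_mw: float) -> float:
    """Headline capital cost per kilowatt of planned capacity."""
    return total_usd / (capacity_mw * 1_000)


PLANT_MW = 462  # six reactors, as quoted in the text

print(f"Project cost:  +{pct_increase(5.3, 9.3):.1f}%")
print(f"Target price:  +{pct_increase(55, 89):.1f}%")
print(f"Original:      ${cost_per_kw(5.3e9, PLANT_MW):,.0f} per kW")
print(f"Revised:       ${cost_per_kw(9.3e9, PLANT_MW):,.0f} per kW")
```

That works out to a cost increase of about 75%, a power price increase of about 62%, and a revised headline cost of roughly $20,000 per kilowatt before a single reactor was built.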

One by one, the subscribing utilities dropped out. Small municipal power systems in Utah could not afford to be locked into a project with billion-dollar cost overruns. In November 2023, NuScale and UAMPS mutually agreed to terminate the project. NuScale’s CEO told a conference call that once you’re on a dead horse, you dismount quickly.

An academic study of 180 nuclear projects worldwide found that 175 of them exceeded their initial budget by an average of 117%. They took, on average, 64% longer than projected. And these were large conventional reactors, the kind the industry has been building for half a century. SMRs, which are newer and less proven, face the additional disadvantage of not benefiting from economies of scale. A 300 MW reactor does not cost one-third of a 1,000 MW reactor. The civil works, the regulatory overhead, the staffing, the safety systems, much of it scales poorly downward. Per-megawatt costs for SMRs are much higher than for large reactors, which themselves are already uncompetitive with renewables on cost.

A December 2025 analysis from Beyond Nuclear International noted that with hundreds of competing SMR designs chasing a finite, niche market, and no demonstration of serial factory production anywhere in the world, the SMR bubble may burst before the end of this decade.

Now, am I saying nuclear has no role in the energy future? No. Am I saying that the current SMR hype, driven partly by genuine need and partly by the desire of tech companies to appear to be solving the power problem, is running well ahead of engineering reality? Absolutely!!! Even if everything goes right for SMR developers from today, you are looking at the early 2030s before any meaningful capacity comes online. For a data centre developer who needs power in 12 months, that is not a solution. It’s actually nothing more than a press release.

The Gas Turbine Crunch

There is a pragmatic solution that everyone reaches for when renewables fall short and the grid cannot respond fast enough: natural gas turbines. They burn gas, they produce electricity reliably around the clock, they can be sited near data centres, and they scale.

The problem is that every data centre builder in the world has reached for this solution simultaneously.

GE Vernova, the largest gas turbine manufacturer, ended 2025 with an 83 GW backlog of contracted gas turbines. CEO Scott Strazik said he expects turbine reservations to be sold out through 2030 by the end of 2026. Siemens Energy is ramping production from about 48 heavy-duty turbines per year to 70 or 80 by 2026 and has invested $1 billion in U.S. manufacturing expansion. Mitsubishi Heavy has most slots booked for 2027 and 2028. Together, these three companies have supplied roughly 90% of global gas turbine demand over the past decade.

Wait times have stretched to as much as seven years in some cases. You can place an order today and take delivery in the next decade. For a company trying to bring a data centre online in 12-18 months, a 2030 turbine delivery date is a super polite way of saying no.

About a third of GE Vernova’s turbine reservations are tied directly or indirectly to AI and hyperscalers. NRG Energy signed a deal for up to 5.4 GW of gas-fired plants between 2029 and 2032. The company called it a “demand supercycle.” The numbers are real, and they are historically unusual.

One interesting Indian company worth noting briefly in this context is TD Power Systems, a Bangalore-based manufacturer of AC generators that couple to gas turbines, steam turbines, hydro turbines, and wind turbines. TDPS makes generators in the 1 MW to 200 MW range, exporting to over 110 countries, and holds a Siemens license for high-speed 2-pole generators up to 250 MVA. With about 70% of order inflows now from exports, TDPS sits at the electrical end of the power generation value chain, converting mechanical energy to electrical output across multiple fuel types. It’s a small company, just approaching $1.5bn in market cap, but structurally positioned to benefit from the turbine buildout cycle globally. Not a recommendation. Just worth knowing it exists.

The Ceramic Box That Showed Up at the Right Time

So: renewables can’t run 24/7, the grid is years behind, gas turbines are booked out past the end of this decade, and SMRs are a decade away at best.

Is there anything that can actually deliver power to a data centre now?

This is where Bloom Energy enters the conversation. And Bloom’s story is worth understanding because it connects directly to an Indian company I want to spend time on later.

Bloom Energy was founded in 2001 by Dr. KR Sridhar, an aerospace engineer who had spent years at NASA working on technology to convert carbon dioxide into oxygen on Mars. When the Mars program lost funding, Sridhar reversed the process. Instead of consuming electricity to produce gases, he built a system that consumes gas to produce electricity. Solid oxide fuel cells (SOFC). Flat ceramic plates that run an electrochemical reaction, converting natural gas (or biogas, or eventually hydrogen) directly into electricity at about 60% efficiency (compared with 35 to 45% for a gas turbine). No combustion. No moving parts. Temperatures above 800 degrees Celsius, which sounds alarming but is actually what makes the chemistry efficient.
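
What the efficiency gap means for the gas bill can be sketched in a few lines. This is an illustration only, using the efficiencies quoted above (about 60% for the fuel cell, 35 to 45% for a gas turbine) and assuming both are measured on the same basis; the function names are hypothetical.

```python
def fuel_per_mwh(efficiency: float) -> float:
    """MWh of fuel energy burned per MWh of electricity delivered."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return 1.0 / efficiency


def fuel_saving(cell_eff: float, turbine_eff: float) -> float:
    """Fraction of fuel saved by the fuel cell for the same electrical output."""
    return 1.0 - fuel_per_mwh(cell_eff) / fuel_per_mwh(turbine_eff)


CELL_EFF = 0.60  # fuel cell efficiency quoted in the text
for turbine_eff in (0.35, 0.45):  # turbine range quoted in the text
    saving = fuel_saving(CELL_EFF, turbine_eff)
    print(f"vs {turbine_eff:.0%} turbine: {saving:.1%} less gas per MWh")
```

On those figures, the fuel cell burns somewhere between a quarter and two-fifths less gas for the same electricity, depending on which end of the turbine range you compare it with.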

For twenty years, Bloom was a well-regarded but commercially modest clean energy company. It had some good customers, including Google and Walmart. It went public in 2018 at $15 a share. It stayed nearly flat for years. Most people had forgotten it existed.

Then the AI power crisis arrived, and Bloom’s product turned out to be exactly what data centre developers were looking for.

Here is why. A Bloom fuel cell installation is modular: you can deploy 10 MW this quarter and another 50 MW next year without rebuilding anything. It runs quietly, at about 65 decibels from ten feet away, which makes it suitable for urban and suburban sites. It can be deployed behind the meter, off-grid, bypassing the interconnect queue entirely. And critically, it can go from order to power-on in 90 to 120 days.

In a world where the utility says the interconnect study takes a year and the turbine manufacturer says 2030, ninety days is extraordinary.

In 2025, the deals started arriving fast. Oracle signed up for on-site fuel cell power at AI data centres, deployed within 90 days. Equinix signed across 19 data centres with over 100 MW of capacity. American Electric Power announced a $2.65 billion deal for solid oxide fuel cells. Brookfield committed $5 billion to deploy Bloom’s technology at AI factories globally.

Bloom’s stock started 2025 at around $20 and surged nearly 400% over the year, then added another 70%+ in January 2026 alone. Revenue for 2025 is around $2.0 billion, with analysts projecting $3.0 billion+ in 2026. The company is doubling manufacturing capacity from 1 GW to 2 GW per year by the end of 2026.

It’s worth noting the risks. Bloom is still unprofitable on a trailing twelve-month basis. Its fuel cells predominantly run on natural gas, not hydrogen, which scuppered an earlier Amazon deal on environmental grounds. The forward P/E is well over 100x. It appears that the market is pricing in five-year perfection. And GE Vernova’s CEO has publicly downplayed fuel cells, arguing that per-MW costs remain higher than gas turbines for baseload applications in the long run. That may well be true. But GE Vernova doesn’t have turbines available for five years, and Bloom can deliver in 90 days. Urgency has its own way of reshaping economics.

The Hotbox and the Company Nobody Has Heard Of

Now here is the question that most people who followed Bloom’s stock run did not think to ask: where do the boxes come from?

A Bloom Energy Server is essentially a stack of flat ceramic plates held in a steel enclosure. The ceramic plates do the electrochemical work. The steel enclosure, engineered to precise tolerances, maintaining exact temperatures and pressures for the reaction to proceed reliably, is called the hotbox. Operating temperature exceeds 800 degrees Celsius. Tolerances are measured in microns. The manufacturing required is closer to aerospace or nuclear work than to conventional metalworking.

For over a decade, Bloom has sourced its hotboxes from an Indian primary supplier.

That supplier is MTAR Technologies, a precision engineering company founded in 1970 in Hyderabad. A company that many people may never have heard of.

Hyderabad, 1970

To understand MTAR, you need to understand the India it was born into.

In 1970, India was living under the shadow of technology sanctions. After its nuclear ambitions became clear, Western governments restricted exports of precision engineering equipment and components. The Indian government needed domestic capabilities for its nuclear reactors, its space rockets, and its defence programmes. It could no longer import them.

MTAR was founded to fill that gap. The company built itself around serving ISRO (India’s space agency), DRDO (its defence research organisation), and NPCIL (its nuclear power corporation). These are not casual clients. They require manufacturing tolerances of 5 to 10 microns, about one-tenth the width of a human hair. They require repeatability across thousands of components. They require documentation and quality control rigorous enough to satisfy the most demanding regulatory bodies on earth.

This is the heritage. Fifty years of building liquid propulsion engines for ISRO, fuel machining heads for nuclear reactors, actuators for the Tejas fighter aircraft, cryogenic engine components for space launch vehicles. Nine manufacturing units in Hyderabad, all within a few kilometres of India’s defence and space research institutions.

When Bloom Energy needed someone to manufacture its hotboxes, it was not looking for a company that could make something roughly the right shape at roughly the right temperature. It was looking for a company with decades of documented precision manufacturing for mission-critical applications where failure means catastrophe. MTAR had exactly that.

The Numbers (And What They Tell You)

MTAR is now Bloom’s primary hotbox supplier, currently meeting 50 to 60% of Bloom’s requirements, and the sole supplier for Bloom’s electrolyser units. Clean energy, predominantly Bloom, represented about 70% of MTAR’s revenue in the first nine months of FY26.

Let that settle for a moment. MTAR is, in a meaningful financial sense, substantially a bet on Bloom Energy. And Bloom Energy is, in an increasingly meaningful sense, a bet on the AI power crisis.

The recent numbers are striking. Q3 FY26 was MTAR’s strongest quarter ever. Revenue hit ~$30.9mn, up ~60% year-on-year. EBITDA surged 92.5% to ~$7.1mn. Net profit more than doubled to ~$3.8mn. The order book as of December 2025 stood at ~$266mn, with ~$152mn of fresh orders received in Q3 alone (of which ~$71.6mn came from the clean energy fuel cell segment).
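
A quick way to read those figures is to back out what they imply about last year’s quarter and about margins. The sketch below does only that, using the approximate dollar figures and growth rates quoted above; the results are rough implications of rounded inputs, not reported numbers, and the helper names are my own.

```python
def prior_period(current: float, yoy_growth: float) -> float:
    """Back out last year's figure from this year's figure and its YoY growth."""
    return current / (1.0 + yoy_growth)


def margin(profit: float, revenue: float) -> float:
    """Profit as a fraction of revenue."""
    return profit / revenue


# Q3 FY26 figures in $mn, as quoted in the text (all approximate).
revenue, ebitda = 30.9, 7.1
prior_revenue = prior_period(revenue, 0.60)   # revenue up ~60%
prior_ebitda = prior_period(ebitda, 0.925)    # EBITDA up 92.5%

print(f"Implied prior-year revenue: ~${prior_revenue:.1f}mn")
print(f"EBITDA margin now:          {margin(ebitda, revenue):.1%}")
print(f"Implied EBITDA margin then: {margin(prior_ebitda, prior_revenue):.1%}")
print(f"Clean energy share of Q3 orders: {margin(71.6, 152):.1%}")
```

Read this way, the quarter implies an EBITDA margin widening from roughly 19% to roughly 23%, with clean energy making up a little under half of the fresh orders.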

MTAR is expanding capacity for solid oxide fuel cell manufacturing from 8,000 units to 12,000 by end of FY26, then 20,000 by end of FY27, with facilities to support 30,000 units thereafter. The company guided for ~$100mn in revenue for FY26 and roughly 50% revenue growth in FY27. A new SEZ plant near Hyderabad airport is being set up specifically for Bloom operations.

The aerospace and defence business is growing separately, with ~$8mn in revenue through the first nine months of FY26 and a stated vision of ~$40-45mn from this segment within three years. MTAR has begun batch production for GKN Aerospace, Rafael, Elbit, and Thales, and has received orders worth ~$55mn for Kaiga nuclear reactor units 5 and 6.

But There Is More Than Just Bloom (And That Matters)

What makes MTAR structurally interesting, beyond the Bloom relationship, is the breadth of end markets it serves and the difficulty of replicating what it does.

India has ambitious nuclear expansion plans. The government has targeted 100 GW of nuclear capacity by 2047, up from roughly 8 GW today. That’s a twelve-fold increase over two decades. MTAR manufactures fuel machining heads, drive mechanisms, bridge assemblies, and coolant channel assemblies for pressurised heavy water reactors. The qualification process for nuclear components takes years. You do not switch suppliers for reactor internals because someone quotes a lower price. The institutional trust, the regulatory certifications, the documentation trail, these take decades to build.
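
For a sense of what that target demands, here is a small sketch of the implied growth rate. It assumes the text’s round figures (roughly 8 GW today, 100 GW targeted) and takes "two decades" literally as 20 years, which is an approximation; the function name is illustrative.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate needed to go from start to end."""
    return (end / start) ** (1.0 / years) - 1.0


START_GW, TARGET_GW, YEARS = 8.0, 100.0, 20  # figures from the text; 20 years assumed

print(f"Multiple:           {TARGET_GW / START_GW:.1f}x")
print(f"Required CAGR:      {cagr(START_GW, TARGET_GW, YEARS):.1%}")
print(f"Average additions:  {(TARGET_GW - START_GW) / YEARS:.1f} GW per year")
```

Under those assumptions the target implies installed capacity compounding at about 13% a year, or an average of more than 4 GW of new nuclear capacity every year for two decades.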

Similarly, MTAR supplies cryogenic engine components and electro-pneumatic modules to ISRO. As India’s space ambitions expand, as commercial satellite launches grow, as the private space ecosystem develops, MTAR’s capabilities are positioned to benefit.

The defence segment, with orders from entities like IAI, Weatherford, and domestic programmes like the Tejas, is still relatively small but growing. India’s defence indigenisation drive, the push to replace imports with domestic manufacturing, creates structural demand for the kind of precision engineering MTAR provides.

This diversification matters because it reduces, somewhat, the concentration risk around Bloom. If Bloom stumbles, MTAR has other segments to lean on. They won’t replace the Bloom revenue overnight, but they provide a floor that doesn’t exist for a pure single-customer play.

The Honest Conversation About Valuation

And now the part that requires honesty. Because any write-up that tells you about a company’s wonderful positioning without telling you what the market is charging for that positioning is selling you something. And that is not my intention.

MTAR Technologies, just like Bloom, is not cheap by any measure. The stock has risen from Rs 1,155 to around Rs 3,500 to 3,700 at the time of writing, a gain of roughly 200% in under a year. Market capitalisation is approximately $1.25bn.

A company earning ~$7.2mn in TTM profits is valued at over $1.25bn. Under any classical valuation framework, this is a stock Ben Graham would not touch with a ten-foot pole. And he’d probably be right to be cautious.
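
The multiple implied by those two numbers is worth spelling out. This is a minimal sketch using only the approximate figures quoted above (a ~$1.25bn market cap, ~$7.2mn of trailing profit, and a share price moving from Rs 1,155 to Rs 3,500 to 3,700); it is arithmetic, not a valuation model.

```python
def trailing_pe(market_cap_mn: float, ttm_profit_mn: float) -> float:
    """Market capitalisation divided by trailing twelve-month profit."""
    return market_cap_mn / ttm_profit_mn


def pct_gain(start: float, end: float) -> float:
    """Percentage gain from start price to end price."""
    return (end - start) / start * 100.0


print(f"Trailing P/E: ~{trailing_pe(1_250.0, 7.2):.0f}x")
for price in (3_500, 3_700):
    print(f"Rs 1,155 -> Rs {price:,}: +{pct_gain(1_155, price):.0f}%")
```

On these inputs the stock trades at roughly 170 to 175 times trailing earnings, after a price gain of a little over 200%.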

The bull case is that the trailing earnings are a poor reflection of the trajectory. Revenue is growing at 50% or more. The order book provides strong visibility. The Bloom relationship is deepening, with higher volumes and new products. Nuclear orders are building. If revenue continues compounding at 30 to 50% annually and margins expand as the business scales, you can construct a scenario where the current market cap looks reasonable two or three years from now.
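
To see how such a scenario is constructed, here is a deliberately simple sketch. The growth rates (30 to 50%) and the ~$100mn FY26 revenue guidance come from the text; the 15% net margin is a purely hypothetical placeholder of mine, not a forecast, and the function is illustrative only.

```python
def forward_pe(market_cap_mn: float, base_revenue_mn: float,
               growth: float, years: int, net_margin: float) -> float:
    """Today's market cap divided by a hypothetical future year's profit."""
    future_revenue = base_revenue_mn * (1.0 + growth) ** years
    return market_cap_mn / (future_revenue * net_margin)


MARKET_CAP_MN = 1_250.0    # approximate, from the text
FY26_REVENUE_MN = 100.0    # company guidance, from the text
ASSUMED_NET_MARGIN = 0.15  # HYPOTHETICAL placeholder, not a forecast

for growth in (0.30, 0.50):
    for years in (2, 3):
        pe = forward_pe(MARKET_CAP_MN, FY26_REVENUE_MN, growth, years,
                        ASSUMED_NET_MARGIN)
        print(f"{growth:.0%} growth for {years} years: ~{pe:.0f}x earnings")
```

The point is the sensitivity, not the specific outputs: small changes in the growth rate, the number of years, or the margin you plug in move the implied multiple a long way, which is exactly why the bull and bear cases can both sound reasonable.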

The bear case is equally straightforward. MTAR appears to be priced to perfection. There have been quarters where revenue dropped significantly, including Q2 FY26 when sales fell 28% year-on-year due to timing. The company is heavily concentrated on Bloom, which itself is unprofitable and priced for perfection. If Bloom’s growth disappoints, or if a competitor emerges who can manufacture hotboxes more cheaply, or if the AI data centre buildout slows, the earnings base that justifies this valuation simply does not exist yet.

I do not have a strong view on which scenario plays out. What I do think is that MTAR’s position in the supply chain is real, its engineering capabilities are genuine, and its customer relationships are decades in the making. Whether the stock at today’s price adequately compensates you for the execution risk is a question each investor must answer for themselves.

I may cover Bloom and MTAR in more depth in a future post. For now, what matters is understanding where they sit in the broader picture.

Connecting the Dots

Let me pull back, because the point of this series has never been to comment on the valuation of individual stocks. It has been to understand the architecture of industries, and the supply chains that hold them together.

We started with ASML, TSMC and Micron. We then covered Nvidia, whose GPUs have become the infrastructure of intelligence. The company that spent a decade being laughed at for investing in CUDA, and is now worth over $4.5 trillion.

And now we arrive at power. At the recognition that none of these things matter if you cannot turn them on. That the chips being shipped from Taiwan, the memory being produced in Boise, the data centres being built across Virginia and Texas, all require electrons in quantities that the world’s electrical infrastructure was not designed to provide.

For those who have read the earlier posts, the connections should now be visible. Nvidia’s next-generation chips need TSMC’s advanced nodes, which need ASML’s EUV machines, which need extreme precision. Those chips need Micron’s HBM. And the data centres that house all of it need power. Constant, reliable, massive quantities of power.

For those who haven’t read the earlier posts, everything you need is right here. But the full supply chain picture, if you’re interested, is worth assembling.

The companies solving the power problem are diverse. Some are building gas turbines with multi-year backlogs. Some are proposing nuclear reactors that may or may not materialise this decade. Some are building solar and wind that can supplement but not yet replace baseload generation. And Bloom Energy, with its ceramic fuel cells and its 90-day deployments, is building something that can plug into a data centre now and provide reliable, off-grid power from natural gas today and potentially hydrogen tomorrow.

In Hyderabad, a company that has been manufacturing precision components for India’s nuclear reactors and space rockets for fifty years is quietly manufacturing the hotboxes that make Bloom’s system work. Not because MTAR set out to be in the AI supply chain. Because precision manufacturing is precision manufacturing, and the tolerances required for fuel cells operating at 800 degrees Celsius happen to be the same tolerances required for rocket engines and nuclear reactor components.

The interesting supply chains tend to run through quiet, highly specialised companies that do one thing extraordinarily well. ASML was a Dutch precision engineering company almost nobody had heard of until it became impossible to ignore. TSMC was a Taiwanese contract manufacturer that sophisticated investors overlooked for years because it didn’t design anything.

Bloom and MTAR may or may not follow that pattern. The engineering position is real. The valuation is debatable. The story is still being written.

What is not debatable is that the power crisis is not a temporary bottleneck. It is a structural constraint that will shape the development of artificial intelligence for the next decade. And the supply chains feeding the infrastructure being built to address it run through places and companies that most people are not looking at.

From the lithography machines in Veldhoven to the foundry floors in Hsinchu to the memory plants in Boise to the GPU farms of Silicon Valley, and onward to the precision engineering workshops of Hyderabad and the generator factories of Bangalore.

The machines that hold the world, as I called them in an earlier post, are powered by electrons. And the electrons are becoming the bottleneck.

Note: This is for educational purposes only and should not be construed as investment advice. The author may or may not hold positions in the companies discussed.

This is the fifth piece in a series exploring the semiconductor and AI infrastructure supply chain. Previous posts covered ASML, TSMC, Micron, and Nvidia. Subscribe to follow along!
